- Show Menu

- Contact Us

- FAQs

- Reader Service

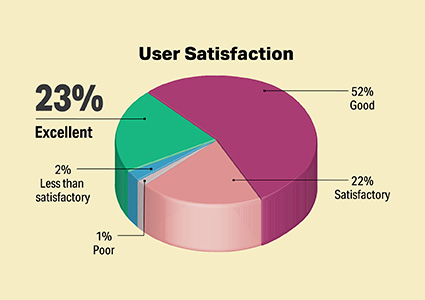

- Survey Data

- Survey Winners

- Testimonials

- Upcoming Events

- Webinars

Digital Pathology and Computational Learning

Category: Digital Pathology

MedicalLab Management Ridgewood Medical Media, LLC

© 2005 - 2024 MLM Magazine - MedicalLab Management. All rights reserved.